Build, Measure, Trust.

T.R.U.S.S. is a structured operating model for designing, measuring, and scaling trustworthy AI. It embeds Transparency, Reliability, User-centricity, Safety, and Security into the AI lifecycle, turning trust into measurable infrastructure.

How T.R.U.S.S. Works

A Structured Operating Model for Trustworthy AI

T.R.U.S.S. runs as a continuous cycle: implement controls, measure outcomes, and improve based on what the measurements show. Each layer connects strategy to execution, making trust a system property rather than a one-time audit.

Implement Controls

Embed structured trust mechanisms — patterns, guardrails, and safeguards — directly into the system architecture.

Measure Outcomes

Make reliability, safety, transparency, and security observable through pillar-level scorecards and KPIs.

Improve Continuously

Detect gaps, strengthen safeguards, and scale trust coverage with confidence across your AI portfolio.
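As a rough illustration of the "detect gaps" step, the sketch below compares pillar scores against targets and lists the pillars that fall short. The function name `find_gaps`, the 0-to-1 score scale, and the target values are all assumptions made for this example; they are not part of T.R.U.S.S. itself.

```python
from typing import Optional

# Hypothetical shape: pillar name -> score in [0, 1], or None if unmeasured.
Scorecard = dict[str, Optional[float]]


def find_gaps(scorecard: Scorecard, targets: dict[str, float]):
    """Return (pillar, score, target) for pillars that are unmeasured or below target.

    Unmeasured pillars come first, then the largest shortfalls.
    """
    gaps = []
    for pillar, target in targets.items():
        score = scorecard.get(pillar)
        if score is None or score < target:
            gaps.append((pillar, score, target))

    def sort_key(gap):
        _, score, target = gap
        if score is None:
            return (0, 0.0)          # unmeasured pillars sort first
        return (1, score - target)   # most negative shortfall next

    return sorted(gaps, key=sort_key)


# Illustrative numbers only.
scorecard = {"reliability": 0.625, "safety": None, "security": 1.0, "transparency": 0.9}
targets = {"reliability": 0.9, "safety": 0.95, "security": 0.99, "transparency": 0.8}

gaps = find_gaps(scorecard, targets)
for pillar, score, target in gaps:
    status = "not measured" if score is None else f"{score:.3f}"
    print(f"{pillar}: {status} (target {target:.2f})")
```

Treating an unmeasured pillar as the most urgent gap is a design choice for this sketch: a pillar with no signal cannot be shown to meet any target.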

Pattern Library

AI Without a Trust Framework Is a Business Risk

AI features are often shipped without structured controls for reliability, safety, transparency, and security.

Trust requires repeatable patterns that prevent failure, make behavior observable, and embed safeguards from the start.

Delivery Framework

How T.R.U.S.S. Comes Together

T.R.U.S.S. organizes AI trust into five connected stages: defining your strategy, implementing patterns, measuring outcomes, enabling teams, and running day-to-day operations. Each stage plays a clear role in building AI you can stand behind.

Training & Adoption

Enablement & cross-functional teams

- Readiness assessments

- Workshops

- Shared vocabulary

- Change alignment

Sokuvo™

Teams operationalizing AI delivery

- Trust-aware product layer

- Delivery intelligence

- Workflow integration

- Architecture alignment

Observability

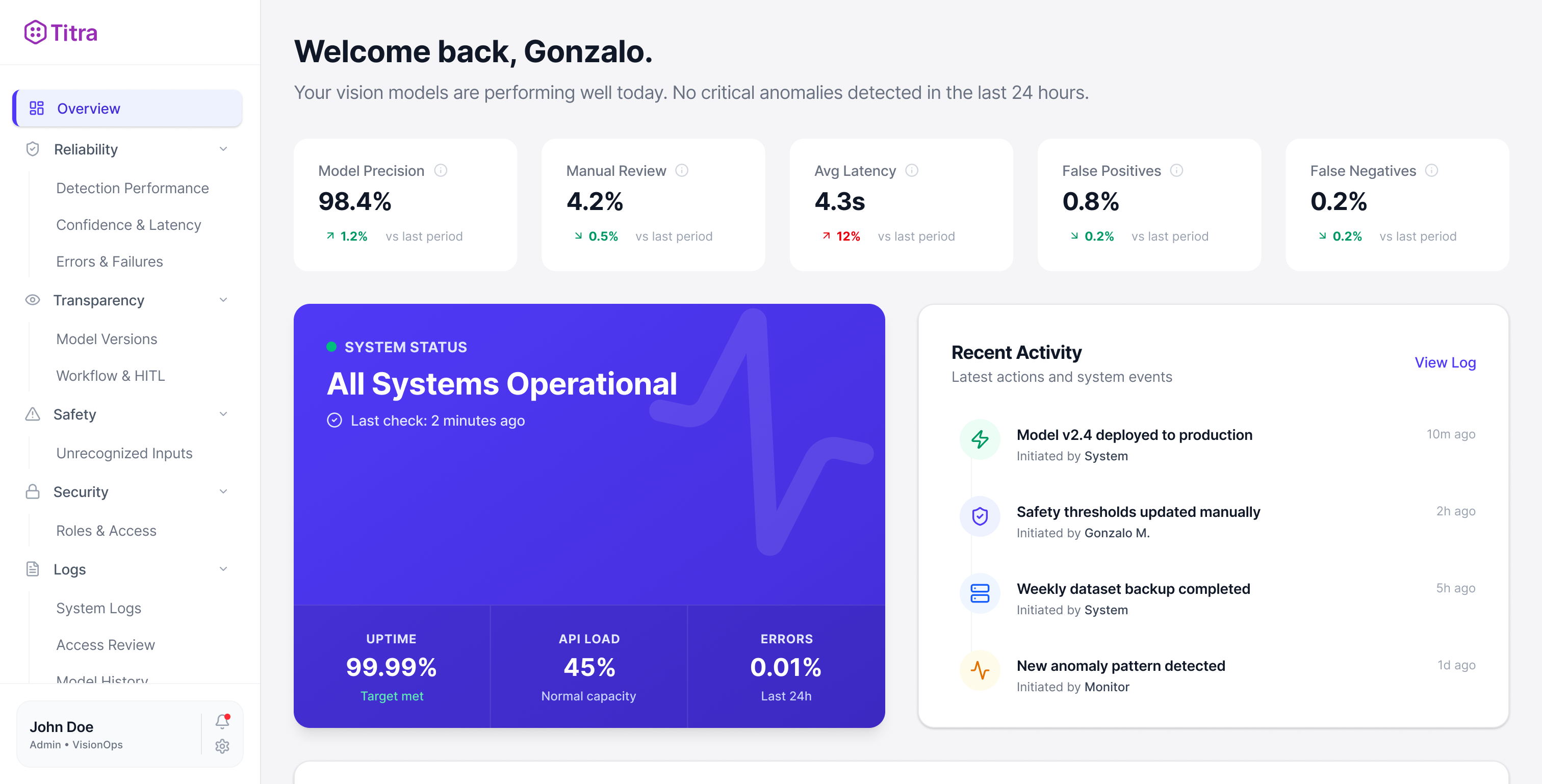

Every Pattern Is Measurable

Trust patterns emit KPIs that roll up into pillar-level scorecards and executive dashboards — giving you real-time visibility into AI system health.

The executive dashboard brings real-time trust scoring, drift detection, and pattern analytics together in a single view.
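One way to picture the roll-up from pattern KPIs to a pillar-level scorecard is the minimal sketch below. It assumes each KPI is already normalized to a 0-to-1 value and that a pillar score is a plain average of its KPIs; the `KpiReading` type, the pattern names, and the averaging rule are hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Optional

# The five pillars named by the framework, as identifiers for this sketch.
PILLARS = ("transparency", "reliability", "user_centricity", "safety", "security")


@dataclass(frozen=True)
class KpiReading:
    pattern: str   # hypothetical pattern name
    pillar: str    # one of PILLARS
    value: float   # assumed to be normalized to [0, 1]


def pillar_scorecard(readings: list[KpiReading]) -> dict[str, Optional[float]]:
    """Average KPI values per pillar; pillars with no readings map to None."""
    by_pillar: dict[str, list[float]] = defaultdict(list)
    for reading in readings:
        if reading.pillar not in PILLARS:
            raise ValueError(f"unknown pillar: {reading.pillar!r}")
        if not 0.0 <= reading.value <= 1.0:
            raise ValueError(f"KPI value out of range: {reading.value!r}")
        by_pillar[reading.pillar].append(reading.value)
    return {p: (mean(by_pillar[p]) if by_pillar[p] else None) for p in PILLARS}


# Illustrative readings only; the pattern names are made up.
readings = [
    KpiReading("citation_coverage", "transparency", 0.9),
    KpiReading("fallback_success", "reliability", 0.75),
    KpiReading("retry_success", "reliability", 0.5),
    KpiReading("injection_block_rate", "security", 1.0),
]

scorecard = pillar_scorecard(readings)
for pillar, score in scorecard.items():
    print(pillar, "n/a" if score is None else f"{score:.3f}")
```

Returning `None` for a pillar without readings, rather than zero, keeps "not measured" distinct from "measured and failing" when the scorecard is displayed.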
